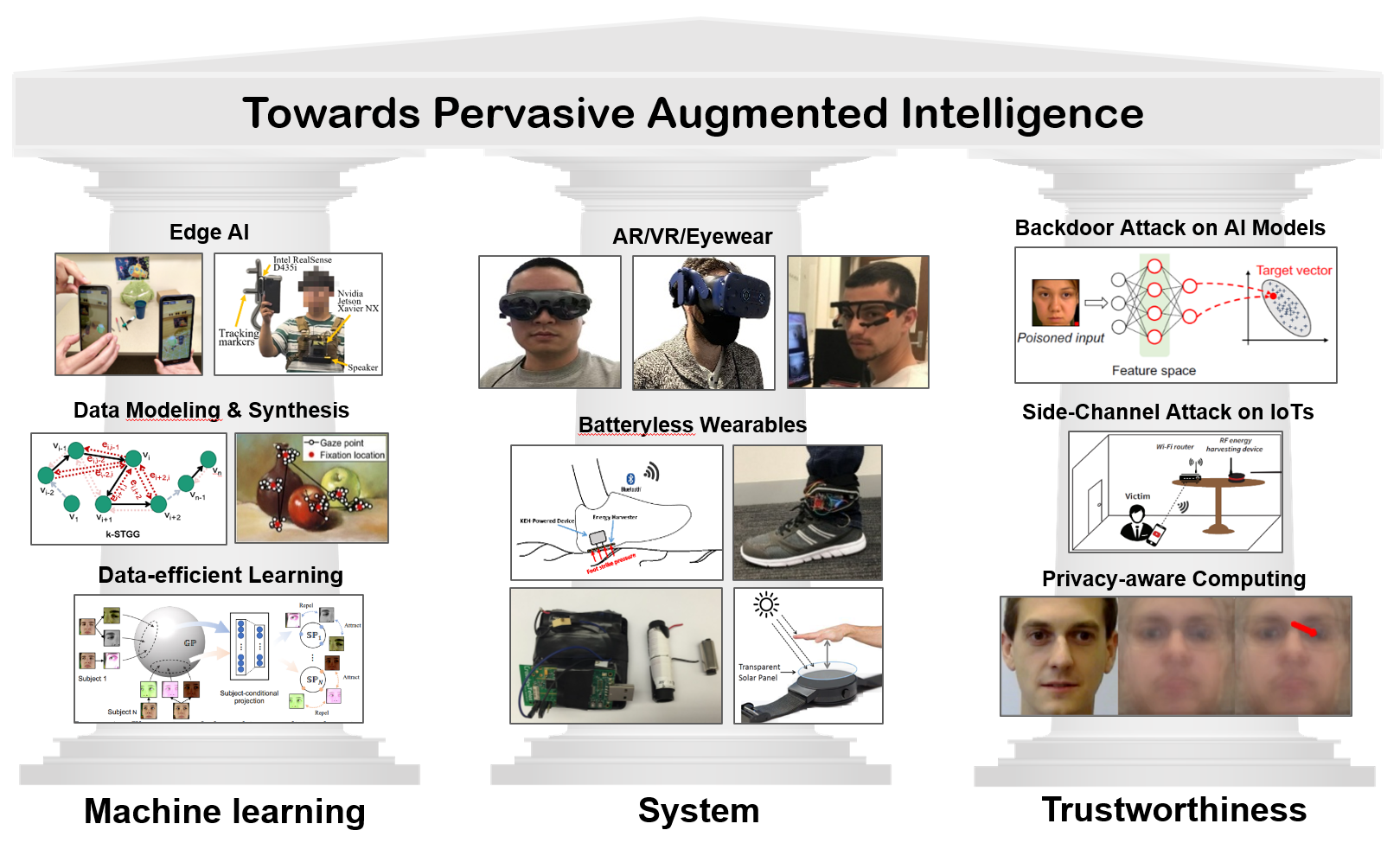

Pervasive Augmented Intelligence

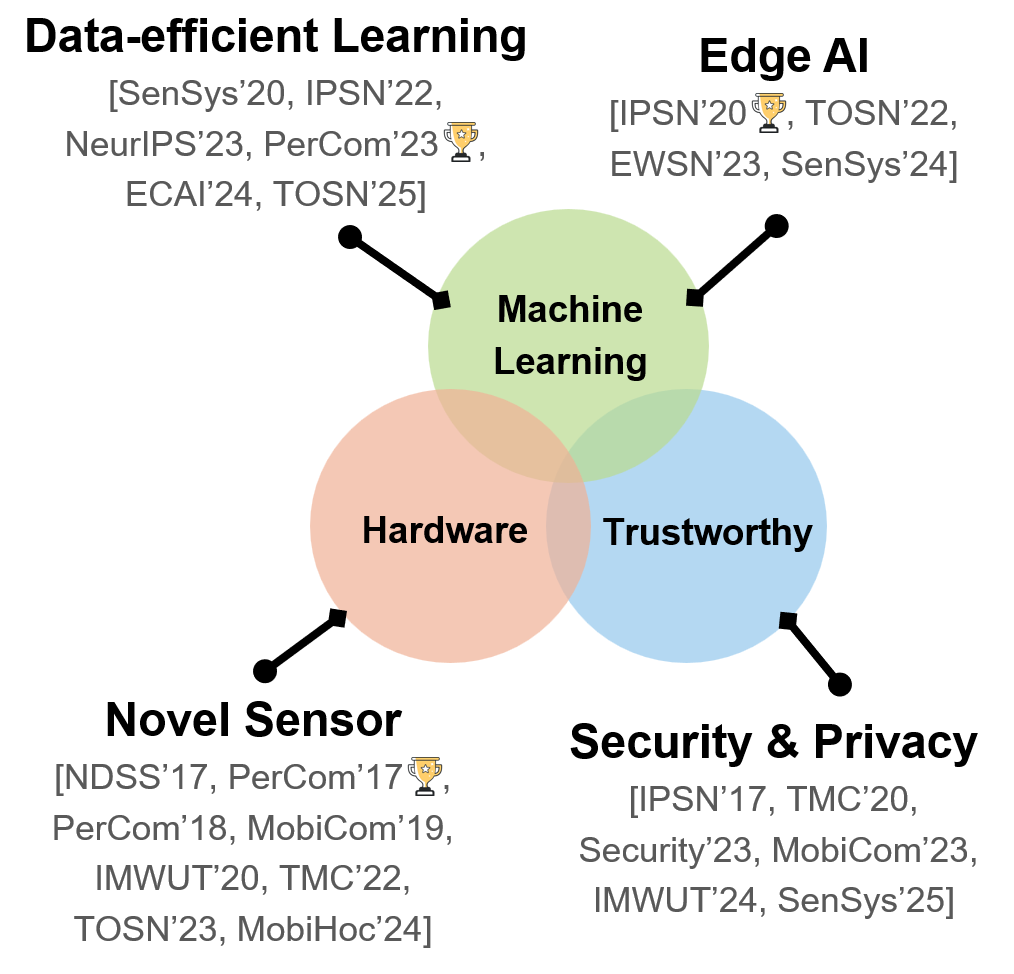

I lead the Pervasive Intelligent Systems Lab, where we design intelligent systems that can sense, learn, and adapt to augment and support human capabilities in healthcare, learning, and work. Our research lies at the intersection of pervasive systems, artificial intelligence, and human-centered sensing. Inspired by the vision of pervasive computing, we explore how computation and intelligence can be seamlessly embedded into the ambient environment and worn on the body to enable continuous, context-aware, and personalized support in everyday life.

We pursue this vision across the full stack, from novel sensing and hardware platforms, to edge and mobile systems, to AI algorithms and trustworthy deployment. The systems we build are designed not only to be capable, but also efficient, robust, and trustworthy in real-world use.

Holistic Sensing

Understanding people through multimodal signals that capture the user, the surrounding environment, and their interaction over time.

Efficient Intelligence

Designing sensing, learning, and system pipelines that work continuously under tight energy, latency, and resource constraints.

Trustworthy Deployment

Building AI systems that are privacy-preserving, secure, and robust enough for real-world human-centered applications.


Resource-efficient AI for Pervasive Computing Systems

We develop resource-efficient machine learning methods for mobile sensing systems, with a particular focus on wearable eye-tracking platforms for cognitive context sensing. This line of research asks how intelligent sensing systems can remain effective under practical constraints in training data, computation, and deployment, especially when they must adapt to diverse users, devices, and real-world settings.

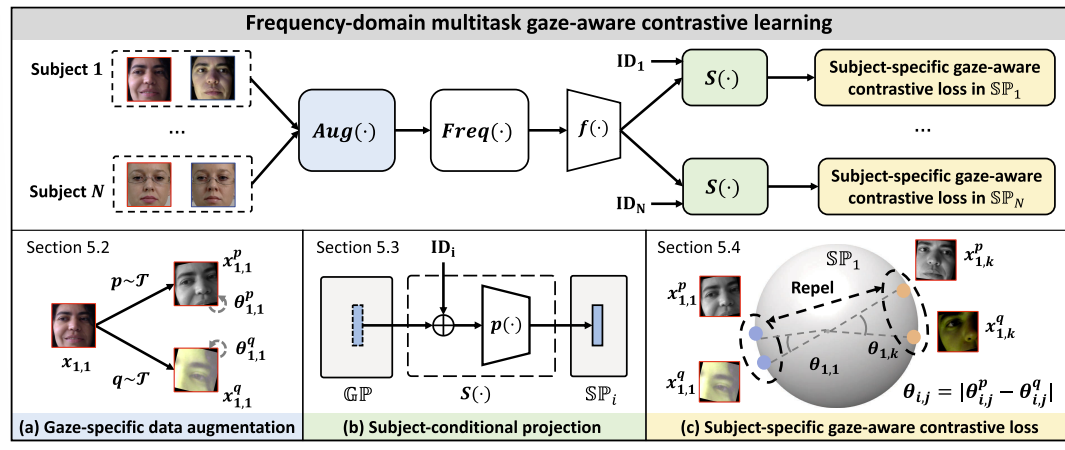

Resource-efficient Gaze Estimation via Frequency-domain Learning

Modern gaze estimation systems have benefited greatly from deep learning, but this progress often comes at a practical cost: high computational overhead and strong dependence on large-scale labeled gaze data. These challenges make deployment difficult on resource-constrained mobile and wearable platforms, where both inference efficiency and calibration cost matter.

In our EfficientGaze work, we developed a resource-efficient framework for gaze representation learning that addresses both challenges jointly. At the core of the system is a frequency-domain gaze estimation pipeline, which exploits the spectral compaction property of the discrete cosine transform (DCT) to extract informative gaze representations at substantially lower computational cost. In parallel, we introduced a multi-task gaze-aware contrastive learning framework that learns gaze representations in an unsupervised manner, reducing the dependence on expensive manual gaze annotation while improving cross-subject generality. Extensive evaluation shows that EfficientGaze achieves gaze estimation performance comparable to existing supervised approaches while significantly improving efficiency, enabling up to 6.80× faster system calibration and 1.67× faster gaze estimation. This work demonstrates how principled representation design in the frequency domain can make gaze-based sensing more practical, scalable, and deployable for real-world mobile systems.
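The spectral compaction property mentioned above can be illustrated with a small, self-contained sketch. This is not the EfficientGaze pipeline: the 8×8 `patch`, the helper names, and the choice of keeping a 4×4 corner are all assumptions made for illustration. It only shows that, for a smooth image patch, most of the signal energy lands in a few low-frequency DCT coefficients, which is why a model can work on far fewer inputs.

```python
import math

def dct_1d(x):
    """Orthonormal DCT-II of a 1D sequence (O(n^2), fine for tiny blocks)."""
    n = len(x)
    out = []
    for k in range(n):
        s = sum(x[i] * math.cos(math.pi * (i + 0.5) * k / n) for i in range(n))
        scale = math.sqrt(1.0 / n) if k == 0 else math.sqrt(2.0 / n)
        out.append(scale * s)
    return out

def dct_2d(block):
    """Separable 2D DCT-II: transform every row, then every column."""
    rows = [dct_1d(r) for r in block]
    cols = [dct_1d(list(c)) for c in zip(*rows)]
    return [list(r) for r in zip(*cols)]

def low_freq_energy_ratio(coeffs, keep):
    """Fraction of total energy held by the top-left keep x keep coefficients."""
    total = sum(c * c for row in coeffs for c in row)
    low = sum(coeffs[u][v] ** 2 for u in range(keep) for v in range(keep))
    return low / total

# Hypothetical 8x8 "eye patch": a smooth gradient with a darker pupil-like blob.
patch = [[100 + 5 * r + 3 * c - 60 * math.exp(-((r - 4) ** 2 + (c - 4) ** 2) / 6.0)
          for c in range(8)] for r in range(8)]

coeffs = dct_2d(patch)
ratio = low_freq_energy_ratio(coeffs, keep=4)
print(f"Energy kept by 16 of 64 coefficients: {ratio:.4f}")
```

Because the transform is orthonormal, the total energy of the coefficients equals that of the pixels, so the ratio directly measures how much of the patch survives when the high-frequency coefficients are discarded.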

Efficient High-frequency Eye Tracking with Event Cameras

Conventional eye trackers are typically constrained by the fixed frame rate and bandwidth of CCD/CMOS cameras. Pushing these systems to very high sampling frequencies often leads to substantial sensing and downstream computational cost. In our EV-Eye work, we rethink eye tracking from an efficiency perspective by leveraging event cameras, which emit signals only when pixel-level intensity changes occur. This event-driven sensing principle allows the system to focus computation on informative eye motion rather than repeatedly processing redundant full frames, making high-frequency tracking far more practical for resource-constrained wearable platforms.

Built on this idea, we developed a hybrid frame-event pipeline that combines low-rate near-eye grayscale images for robust pupil segmentation with asynchronous event streams for lightweight pupil updates. Rather than operating at a fixed maximum rate, the system updates adaptively based on how quickly informative events accumulate: it speeds up automatically during rapid eye movements such as saccades, and slows down when the eye is relatively stable. This design enables pupil tracking at up to 38.4 kHz while better utilizing limited computation, energy, and bandwidth.
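The adaptive update behavior can be sketched with a simple event counter. This is a minimal illustration, not the EV-Eye implementation: the function name, the `events_per_update` threshold, and the synthetic timestamps are assumptions. An update fires whenever a fixed number of events has accumulated, so the update rate follows the event rate instead of a fixed clock.

```python
def adaptive_update_times(event_timestamps_us, events_per_update):
    """Return the timestamps (in microseconds) at which a tracker update fires.

    An update fires each time `events_per_update` new events have accumulated.
    """
    updates = []
    pending = 0
    for t in event_timestamps_us:
        pending += 1
        if pending >= events_per_update:
            updates.append(t)
            pending = 0
    return updates

# Synthetic stream (assumed rates): sparse events while the eye is stable,
# then dense events during a saccade.
fixation = [i * 1000 for i in range(100)]          # one event per 1000 us
saccade = [100_000 + i * 10 for i in range(1000)]  # one event per 10 us

updates = adaptive_update_times(fixation + saccade, events_per_update=50)
fix_updates = [t for t in updates if t < 100_000]
sac_updates = [t for t in updates if t >= 100_000]

print("updates during 100 ms of fixation:", len(fix_updates))
print("updates during 10 ms of saccade:", len(sac_updates))
```

With these synthetic rates, the saccade segment is ten times shorter than the fixation segment yet triggers ten times as many updates, which is the behavior the paragraph above describes.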

Graph-based Few-shot Cognitive Context Sensing

Eye movements are tightly coupled with cognitive processes, making them a powerful sensing modality for inferring a person’s psychological and cognitive state. Yet, building robust gaze-based cognitive sensing systems is challenging because eye-movement data is highly heterogeneous across individuals, visual stimuli, and eye-tracking devices.

In our GazeGraph work, we developed a generalized deep learning framework that robustly recognizes a user’s cognitive context under such heterogeneity and rapidly adapts to unseen sensing scenarios with minimal training data. Leveraging graph-based modeling, we represent eye-movement trajectories as spatio-temporal gaze graphs, enabling deep models to jointly capture the structural and temporal dynamics of gaze behavior. On top of this representation, we introduced a few-shot graph learning module based on meta-learning, allowing fast and data-efficient adaptation to new users and new eye trackers. The system was demonstrated on the Magic Leap AR headset, showing how eye-movement-based cognitive inference can support context-aware immersive applications.
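To make the idea of a spatio-temporal gaze graph concrete, here is a minimal sketch under stated assumptions. It is not GazeGraph's actual graph construction: the rule used here (link consecutive samples in time, and link any two samples closer than a hypothetical `spatial_radius`) is just one simple way to encode both temporal order and spatial structure in an adjacency matrix.

```python
import math

def build_gaze_graph(gaze_points, spatial_radius):
    """Build a symmetric adjacency matrix over gaze samples.

    Temporal edges link consecutive samples (i, i + 1).
    Spatial edges link non-consecutive samples within `spatial_radius`.
    """
    n = len(gaze_points)
    adj = [[0] * n for _ in range(n)]
    for i in range(n - 1):
        adj[i][i + 1] = adj[i + 1][i] = 1
    for i in range(n):
        for j in range(i + 2, n):
            if math.dist(gaze_points[i], gaze_points[j]) <= spatial_radius:
                adj[i][j] = adj[j][i] = 1
    return adj

# Hypothetical trajectory: look at region A, jump to region B, return to A.
trajectory = [(0.0, 0.0), (0.1, 0.0), (5.0, 5.0), (5.1, 5.0), (0.0, 0.1)]
adj = build_gaze_graph(trajectory, spatial_radius=0.5)
num_edges = sum(sum(row) for row in adj) // 2
print("edges:", num_edges)
```

The revisit to region A creates spatial edges between the last sample and the first two, so the graph records the return to a previously viewed location, something a plain time series leaves implicit.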

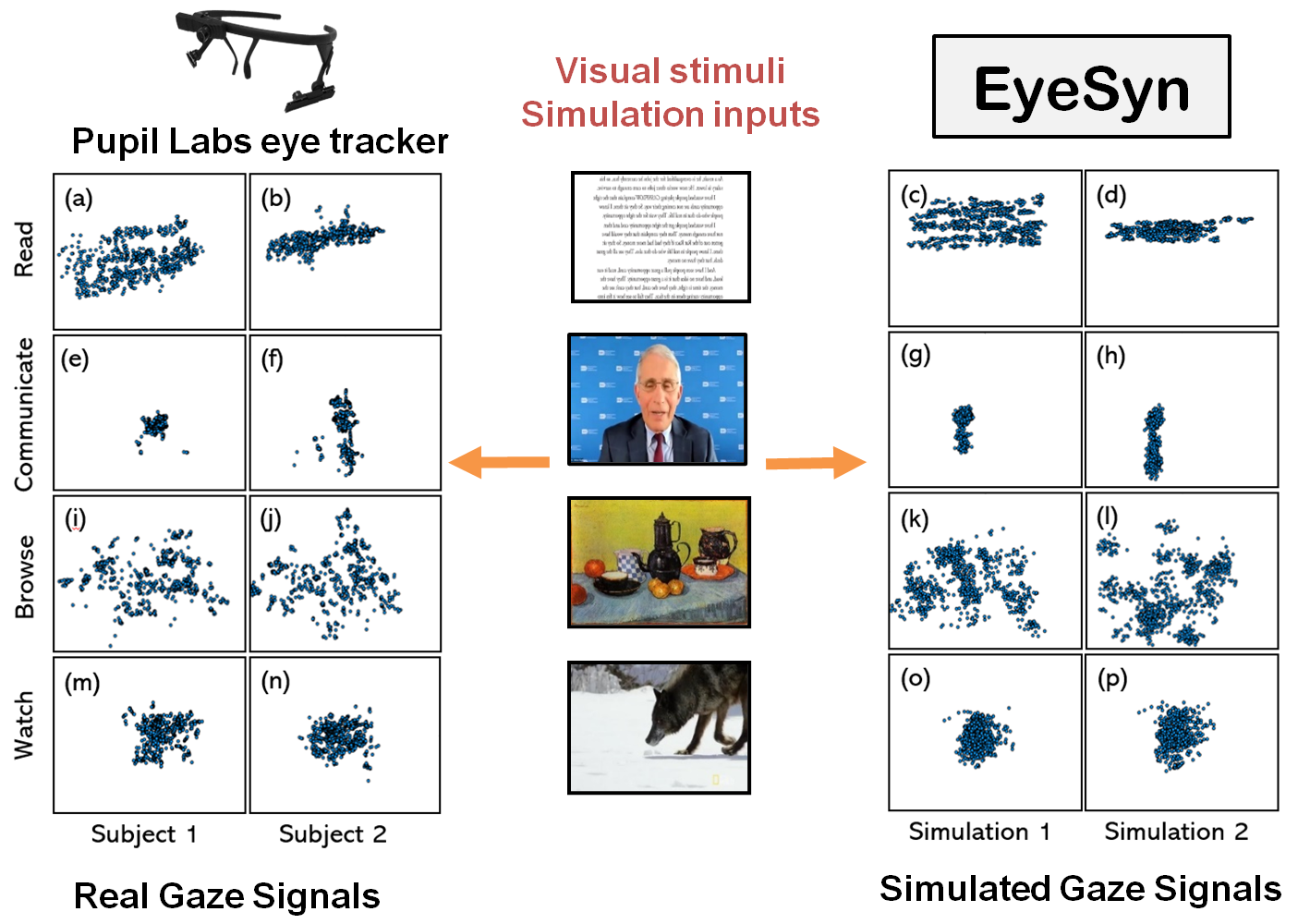

Psychology-inspired Generative Model for Eye Movement Synthesis

Gaze-based sensing also lacks diverse and sufficiently large eye-movement datasets for training. Conventional data augmentation approaches, such as GAN-based generation, themselves require large heterogeneous gaze datasets for training, which are rarely available in practice.

In our EyeSyn work, we addressed this bottleneck by introducing a psychology-inspired gaze synthesis framework that eliminates the need for large-scale human gaze collection. Grounded in cognitive and behavioral science, EyeSyn synthesizes the gaze signals that an eye tracker would record during specific cognitive activities. Unlike conventional data collection, which is tied to particular lab setups, stimulus designs, and subject pools, EyeSyn can systematically simulate a wide range of eye-tracking configurations, including viewing distances, stimulus sizes, and sampling frequencies. EyeSyn faithfully reproduces characteristic human gaze patterns and captures diversity arising from different sensing setups and individual variability. It provides a practical tool for scaling, stress-testing, and improving gaze-based sensing systems when real annotated data is limited.
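The effect of the simulated configuration parameters can be sketched as follows. This is not EyeSyn's generative model: the fixation list is hard-coded rather than produced by a psychology-inspired model, and the function and parameter names are hypothetical. The sketch only shows how viewing distance, stimulus size, and sampling frequency change the signal an eye tracker would record for the same on-screen behavior, using the standard visual-angle relation θ = 2·atan(s / 2d).

```python
import math

def visual_angle_deg(size_cm, distance_cm):
    """Visual angle subtended by a stimulus of a given size at a given distance."""
    return math.degrees(2 * math.atan(size_cm / (2 * distance_cm)))

def synthesize_gaze(fixations, stimulus_cm, distance_cm, sampling_hz):
    """Turn fixations into gaze-angle samples for one tracker configuration.

    fixations: list of (x_norm, y_norm, duration_s), with positions given as a
    fraction of the stimulus size, measured from the stimulus center.
    Returns a list of (x_deg, y_deg) samples, held constant within a fixation.
    """
    samples = []
    for x_norm, y_norm, duration_s in fixations:
        x_deg = math.degrees(math.atan(x_norm * stimulus_cm / distance_cm))
        y_deg = math.degrees(math.atan(y_norm * stimulus_cm / distance_cm))
        samples.extend([(x_deg, y_deg)] * round(duration_s * sampling_hz))
    return samples

# Hard-coded fixations (assumption): left of center for 0.2 s, then right for 0.3 s.
fixations = [(-0.25, 0.0, 0.2), (0.25, 0.1, 0.3)]

low = synthesize_gaze(fixations, stimulus_cm=30, distance_cm=60, sampling_hz=30)
high = synthesize_gaze(fixations, stimulus_cm=30, distance_cm=30, sampling_hz=120)
print(len(low), "samples at 30 Hz;", len(high), "samples at 120 Hz")
```

The same fixations yield more samples at the higher sampling frequency and larger gaze angles at the shorter viewing distance, which is the kind of setup-dependent variation a synthesis tool has to cover.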

Selected Media Coverage

Our sponsors

Trustworthy AI for Pervasive Computing Systems

Building trustworthy AI for mobile systems poses significant research challenges, as these systems continuously collect sensitive user data and are increasingly deployed in safety- and privacy-critical settings. This line of research focuses on the security and privacy of mobile and wearable systems, with an emphasis on understanding emerging attack surfaces and developing practical defenses that preserve usability.

Privacy-preserving Mobile Eye Tracking

Eye tracking has become increasingly accessible on mobile devices, where tablets and smartphones can support gaze estimation either through cloud-based services or local software. In both cases, however, users typically need to share full-face images with the service provider, which creates substantial privacy risks if these images are collected to infer sensitive attributes.

In our PrivateGaze work, supported by a Meta Faculty Research Award, we developed the first method to preserve user privacy in black-box mobile gaze-tracking services without sacrificing gaze estimation accuracy. The framework deploys a privacy-preserving module directly on the user’s mobile device to transform sensitive full-face images into obfuscated versions before they are shared with the service. These privacy-enhanced images remove sensitive cues such as identity and gender while retaining gaze-related information needed for accurate eye tracking. Our method effectively defends against unauthorized sensitive-attribute inference while maintaining gaze estimation performance comparable to conventional full-face inputs.

Backdoor Attacks on Mobile Eye Tracking

Building trustworthy mobile AI requires more than strong predictive performance; it also demands robustness against hidden and adversarial failures introduced during model development. In gaze-enabled mobile systems, models are often trained on unverified public datasets or outsourced to external providers, creating serious security risks. One particularly concerning threat is the backdoor attack, in which malicious triggers such as facial accessories or digital patches are covertly injected during training, causing the model to behave normally under benign conditions but produce attacker-controlled outputs when triggered. Such vulnerabilities can have severe consequences in safety-critical applications, including driver monitoring and human–machine interaction.
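The threat model can be made concrete with a toy sketch of training-set poisoning for a regression task such as gaze estimation. Everything here is an assumption for illustration (tiny list-based images, a bright corner patch as the trigger, the helper names, and the poisoning rate); it does not reproduce any specific attack or the evaluation setup of our work. A small fraction of samples receives the trigger and has its gaze label replaced by an attacker-chosen target, while the remaining samples stay untouched.

```python
import random

def stamp_trigger(image, patch_size=2, value=255):
    """Return a copy of the image with a bright square in the bottom-right corner."""
    out = [row[:] for row in image]
    height, width = len(out), len(out[0])
    for r in range(height - patch_size, height):
        for c in range(width - patch_size, width):
            out[r][c] = value
    return out

def poison_dataset(samples, target_gaze, poison_rate, seed=0):
    """Stamp the trigger on a random subset and relabel it with `target_gaze`.

    samples: list of (image, gaze) pairs. Returns (new_samples, poisoned_indices).
    """
    rng = random.Random(seed)
    n_poison = int(len(samples) * poison_rate)
    poisoned = set(rng.sample(range(len(samples)), n_poison))
    out = []
    for i, (image, gaze) in enumerate(samples):
        if i in poisoned:
            out.append((stamp_trigger(image), target_gaze))
        else:
            out.append((image, gaze))
    return out, poisoned

# Toy dataset: 20 uniform 6x6 "images" with (yaw, pitch) gaze labels in degrees.
clean = [([[10 * i % 200] * 6 for _ in range(6)], (float(i), -float(i)))
         for i in range(20)]
dirty, poisoned_idx = poison_dataset(clean, target_gaze=(30.0, 0.0), poison_rate=0.1)
print("poisoned samples:", sorted(poisoned_idx))
```

A model trained on `dirty` sees mostly correct data, which is why such an attack is hard to notice from accuracy on benign inputs alone.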

To address this challenge, we developed SecureGaze, the first system designed to detect and mitigate backdoor attacks on gaze estimation models, establishing an important step toward secure and trustworthy eye-tracking systems. SecureGaze is a practical and lightweight system, requiring only a small benign dataset to detect and mitigate attacks while substantially outperforming adapted defenses from standard classification settings.

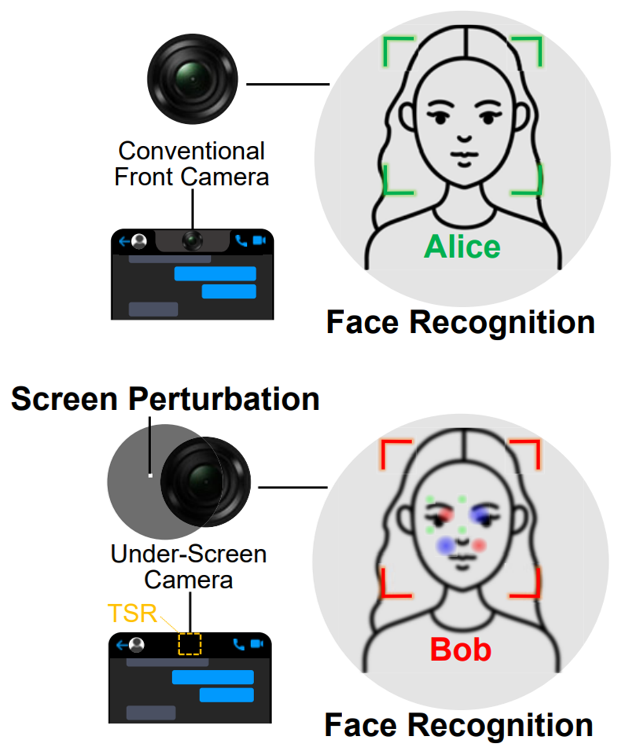

Adversarial Attack with Under-screen Camera

As smartphone vendors increasingly adopt bezel-less displays, under-screen cameras (USCs) are becoming an important new sensing interface. In ScreenPerturbation, we show that the translucent screen region above the camera can be turned into a security and privacy control. By subtly modulating screen pixels in this region, we generate perturbations that are imperceptible to users yet highly disruptive to USC images.

Based on a physics-grounded imaging model of under-screen cameras, we developed two algorithms that manipulate screen pixels to produce strong image perturbations while preserving visual quality. Experiments on three commercial USC smartphones show that these perturbations reliably degrade the performance of state-of-the-art image classification and face recognition models. This enables privacy protection or the intentional disabling of camera functionality in malicious or untrusted scenarios. To the best of our knowledge, this is the first work to systematically expose the security and privacy implications of emerging under-screen camera systems.

Selected Media Coverage

Energy-efficient IoT Sensing and Communication

This research direction investigates how to build sustainable, self-powered sensing systems by rethinking the relationship between energy harvesting, sensing, and communication.

Energy-efficient Sensing and Communication for Self-powered IoT Systems

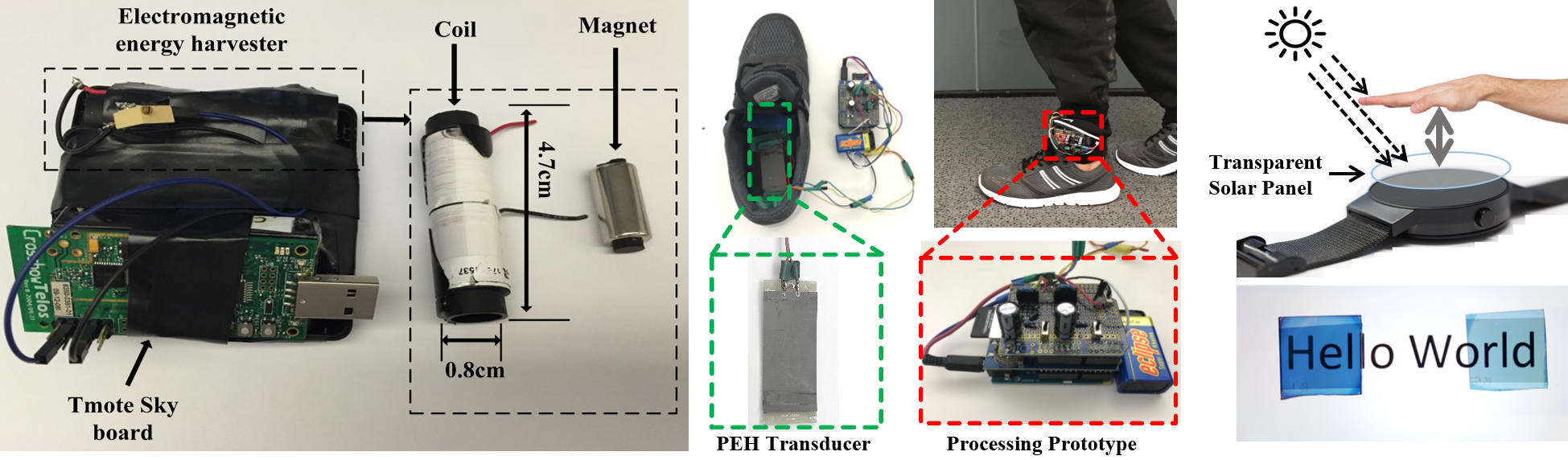

Self-powered IoT systems harvest energy from ambient sources such as light and motion to power their operation. Compared with conventional battery-powered devices, energy harvesting offers a more sustainable alternative. Yet, enabling perpetual operation remains highly challenging because harvested ambient energy is intermittent and unstable. At the same time, many IoT applications rely on power-hungry sensors for context sensing, which quickly deplete the limited energy budget.

Our research introduced a new paradigm that leverages the energy-harvesting signal itself as a sensing modality. The key insight is that harvested energy inherently encodes physical context associated with the energy transformation process. For example, the electrical output of a kinetic transducer reflects the motion of its source, including characteristics such as velocity and acceleration. This idea turns energy transducers into perpetual, energy-positive sensors that both generate energy and provide information about context. A major challenge, however, is that energy transducers are coarse and indirect sensing elements rather than precision measurement devices. Extracting meaningful information from raw harvested signals, whether kinetic, solar, or RF-based, requires rethinking traditional sensing pipelines. To address this, we developed:

- Signal processing and learning methods for noisy harvested signals [TMC'17] [NDSS'17] [PerCom'17] [PerCom'18] [Computer'18] [MobiCom'19] [TMC'19] [IMWUT'20] [T-ITS'20].

- A sampling strategy that reduces microcontroller wake-up time by 100× [TIOT'20].

- Hardware designs that enable simultaneous energy harvesting and sensing with a single transducer [IoTDI'18] [TMC'22].

These contributions enabled a broad range of self-powered applications, including activity recognition, gait-based authentication, and vibration-based communication, highlighting the versatility of this sensing paradigm. Follow-up work on radio-frequency energy harvesting can be found in [Security'23] and [MobiHoc'24].
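As a small illustration of using the harvesting signal itself as a sensor, the sketch below recovers a motion cadence from a synthetic harvester voltage trace. The signal, its sampling rate, and the function name are assumptions made for this example; the published systems use more elaborate processing and learning methods for real, noisy signals. The point is only that a periodic motion leaves a spectral peak in the transducer output that can be read without a dedicated motion sensor.

```python
import math

def dominant_frequency_hz(signal, sampling_hz):
    """Return the frequency of the strongest non-DC component via a plain DFT."""
    n = len(signal)
    mean = sum(signal) / n
    centered = [v - mean for v in signal]
    best_k, best_power = 0, -1.0
    for k in range(1, n // 2 + 1):
        re = sum(v * math.cos(2 * math.pi * k * i / n) for i, v in enumerate(centered))
        im = -sum(v * math.sin(2 * math.pi * k * i / n) for i, v in enumerate(centered))
        power = re * re + im * im
        if power > best_power:
            best_k, best_power = k, power
    return best_k * sampling_hz / n

# Synthetic kinetic-harvester voltage (assumption): a 2 Hz step cadence plus a
# weaker 7 Hz vibration component, sampled at 50 Hz for 4 seconds.
sampling_hz = 50
voltage = [0.5 * math.sin(2 * math.pi * 2 * i / sampling_hz)
           + 0.1 * math.sin(2 * math.pi * 7 * i / sampling_hz)
           for i in range(200)]

cadence = dominant_frequency_hz(voltage, sampling_hz)
print(f"estimated cadence: {cadence:.2f} Hz")
```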

Research Impact

This line of work received broad recognition, including the 2017 New South Wales State Research & Development Project of the Year, the 2017 New South Wales State Mobility Innovation of the Year, and a Merit Recipient award for the 2017 Australia National Research & Development Project of the Year. These awards were presented by the Australian Information Industry Association and recognize excellence in Australian innovation with strong potential to create positive impact for the community. The research has also attracted attention beyond academia and has been featured by public media and innovation communities, reflecting its broader societal and technological impact.

Selected Media Coverage

Sponsors